Whoa! Your next MacBook could have eye and hand-tracking

Go all 'Minority Report' on your MacBook!

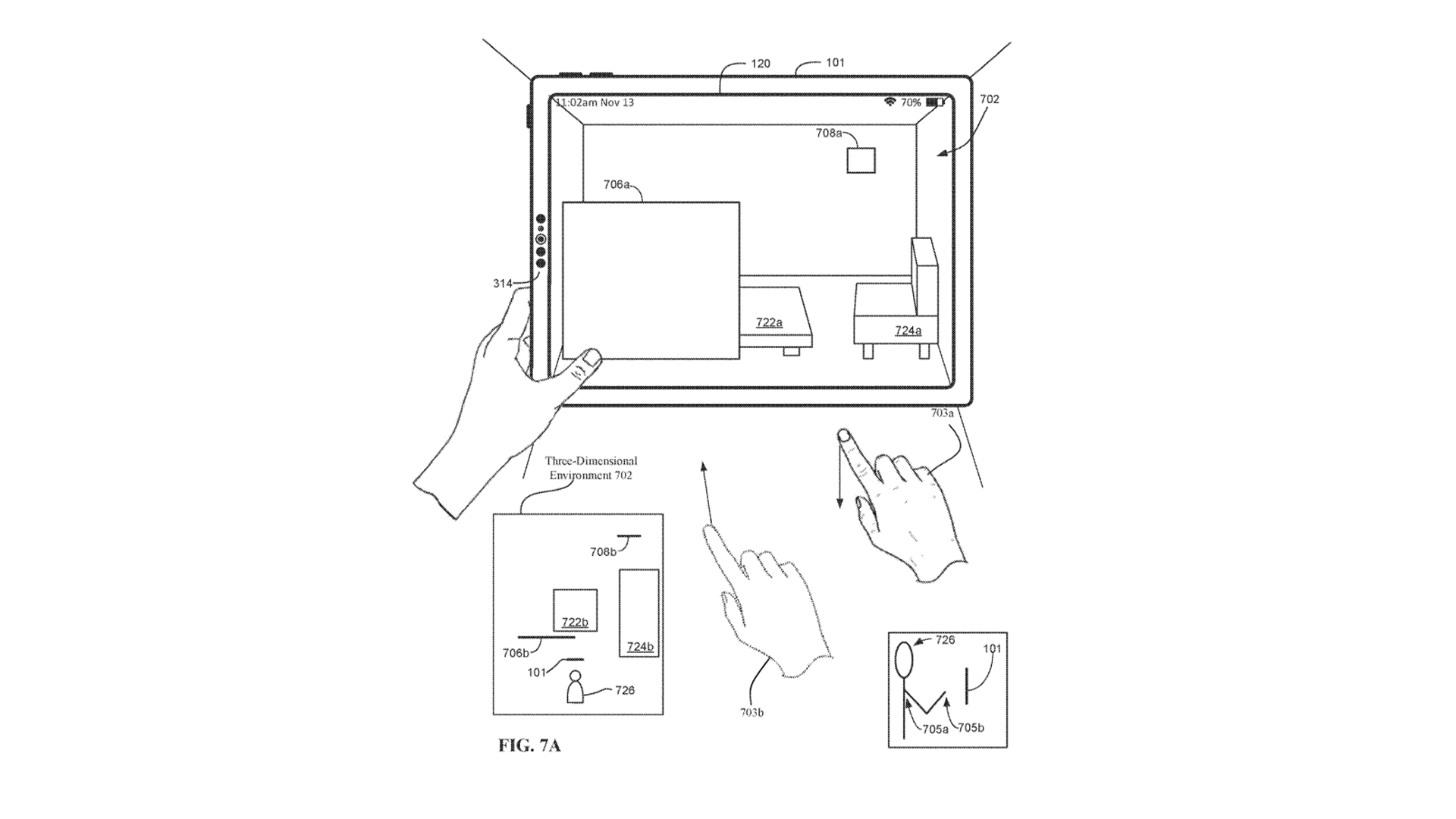

Could the next generation of MacBooks and iPads give you the ability to interact with 3D environments via advanced eye and hand-tracking technology? That’s what Apple’s latest patent suggests.

Sure, the illustrations focus on an iPad, but the patent also discusses using this tech in Apple’s XR headset, iPhones and, most interestingly to us, a MacBook. That means Apple could finally be on the verge of unlocking input methods for its laptops beyond the standard keyboard and trackpad.

How would it work?

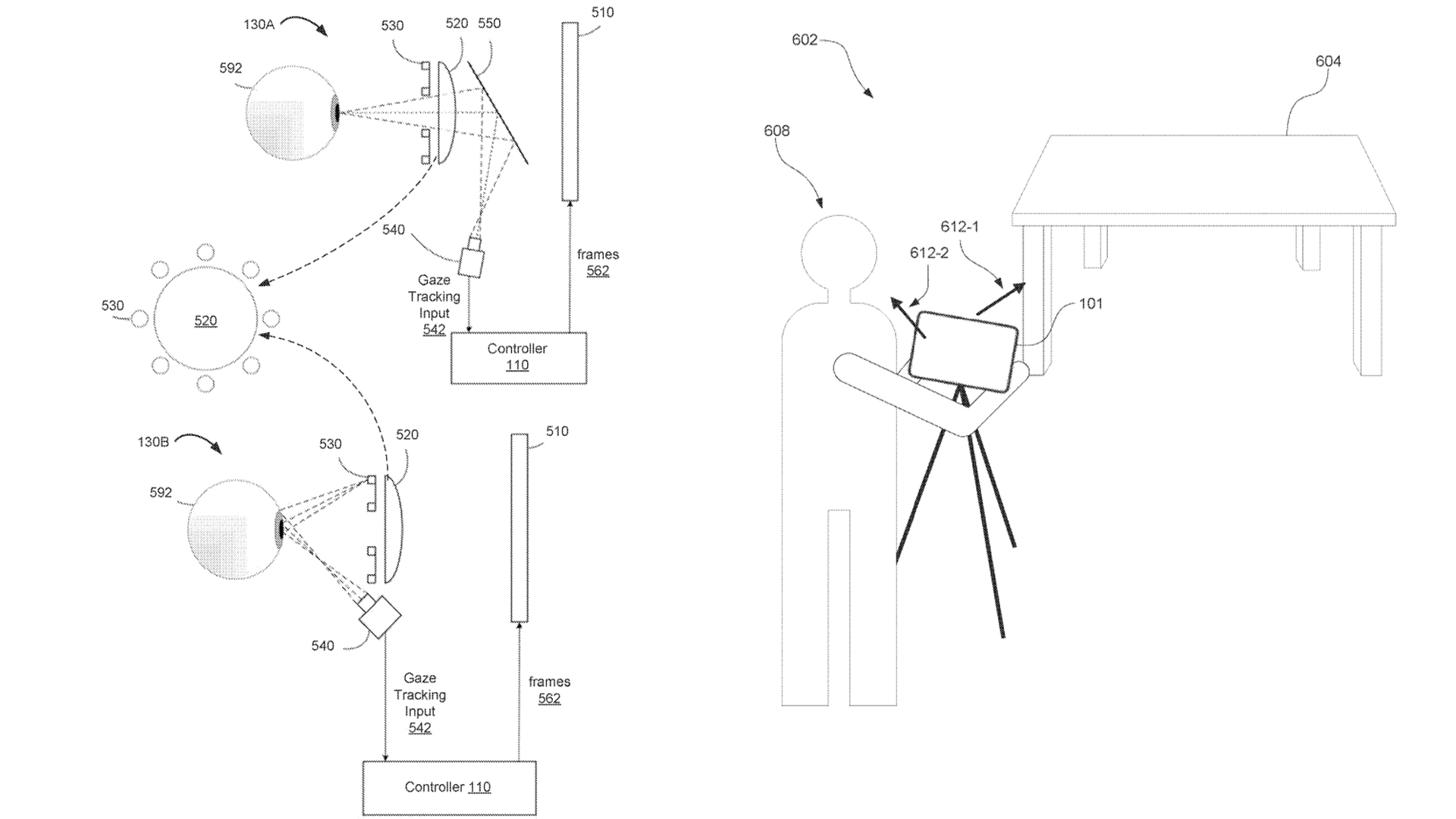

Based on the patent, published today by the US Patent & Trademark Office, this looks to be the next logical step in Apple’s AR aspirations, which have progressed slowly over the last few years. That said, the approaches the company describes for tackling this feature are wide-ranging.

The filing primarily addresses key issues with the current crop of eye- and hand-tracking tech: the lack of feedback when interacting with virtual objects, the sometimes error-prone nature of those interactions, and the often convoluted button combinations they require. With this in mind, Apple is looking to streamline and simplify the whole process.

One thing is consistent across all of these approaches: eye tracking works by detecting and following your pupils, and that data is then used to simulate a 3D virtual environment in front of you.

As for manipulating the 3D items in front of you, Apple’s covered a lot of bases here with several options:

- Your hands via a camera and depth sensor

- A hand-tracking device/motion sensor

- A touchscreen display or the trackpad

- A wearable smart glove

- A remote control or stylus-like device

Outlook

If there is one feature Mac users have been craving over the past several years without a peep from Apple, it’s touchscreen interactivity. This patent suggests Apple could skip that step entirely, and it points to a very interesting future for AR development and interactive 3D content.


The various input methods do drum up a little concern for me: I’d prefer a camera/depth sensor method over wearing a glove. And one thing that isn’t mentioned anywhere in this patent is whether this would be complemented by 3D display tech like that of the Asus ProArt StudioBook. It seems logical that such a display would be included, given the eye-tracking capabilities!

Of course, this is a patent — not a guarantee of a new feature. But it is certainly proof that Apple is thinking about it, and that’s enough to get pretty hyped about what is happening in the depths of that UFO campus.

Jason brought a decade of tech and gaming journalism experience to his role as a writer at Laptop Mag, and he is now the Managing Editor of Computing at Tom's Guide. He takes a particular interest in writing articles and creating videos about laptops, headphones and games. He has previously written for Kotaku, Stuff and BBC Science Focus. In his spare time, you'll find Jason looking for good dogs to pet or thinking about eating pizza if he isn't already.