How Personalized Spatial Audio actually works — how to use the AirPods’ best feature

Let’s clear some things up about Apple’s Personalized Spatial Audio

Personalized Spatial Audio is a much talked-about feature of Apple’s AirPods line, especially the AirPods Pro 2. But how does it actually work?

Apple has been quick to brag about what spatial audio does: it uses the accelerometers and gyroscopes in your AirPods and iOS device, alongside tiny adjustments to the audio frequencies in each ear, to simulate a sphere of sound around you that changes as you turn your head.

But the more intriguing questions about personalizing this spatial audio are a little trickier to answer. What is Apple looking for when you use your iPhone’s TrueDepth camera? What difference does this actually make? And is that difference significant? After catching up with Apple, I have a few answers on how Personalized Spatial Audio actually works, and you’ll also find a quick guide on how to set it up.

Features: Apple H2 chip, Voice cancellation, Personalized Spatial Audio, hands-free Siri, IP54 dust, sweat and water resistance

How Personalized Spatial Audio works

We’ve seen a lot of different explanations across various sites about how Personalized Spatial Audio works, including the claim that it scans the inside of your ear to work out how to create a better sense of space around it.

The reality is a little simpler than that. Apple confirms that your Personalized Spatial Audio profile is based on scans of your head, which take into account the geometry of your head and the position of your ears.

With this information, Apple is then able to tailor the spatial audio “sphere” around your head so that it responds more realistically to where your ears are placed. Put simply, everyone’s ears are different and there is no such thing as a symmetrical pair of ears.

So, the scan establishes the angle at which audio enters your ears and tailors the mix to it. Any changes to the sound within your ear are handled by Adaptive EQ. This uses the microphones placed on the part of the buds that goes inside your ear to tune the levels for a more consistent listening experience that is true to the original mix, regardless of how big or small your ear canals are.
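To get a feel for why head geometry matters at all, here is a rough Python sketch. It is not Apple’s method, which the company hasn’t detailed beyond the points above. It uses Woodworth’s textbook spherical-head approximation to estimate the tiny gap between a sound reaching one ear and then the other. The function name, the two head sizes and the 45-degree angle are all assumptions picked purely for illustration.

```python
import math

SPEED_OF_SOUND_M_S = 343.0  # approximate speed of sound in air at room temperature


def interaural_time_difference(head_radius_m: float, azimuth_deg: float) -> float:
    """Estimate the arrival-time gap between the two ears, in seconds.

    Uses Woodworth's spherical-head approximation, which treats the head
    as a sphere. azimuth_deg is the angle of the sound source away from
    straight ahead, from 0 to 90 degrees.
    """
    theta = math.radians(azimuth_deg)
    return (head_radius_m / SPEED_OF_SOUND_M_S) * (theta + math.sin(theta))


# Two hypothetical listeners hearing the same sound 45 degrees to one side.
for radius_cm in (8.0, 9.5):
    itd_us = interaural_time_difference(radius_cm / 100.0, 45.0) * 1e6
    print(f"head radius {radius_cm} cm: gap of about {itd_us:.0f} microseconds")
```

The larger head produces a noticeably longer gap for the same sound, which is the kind of per-person difference a one-size-fits-all profile can’t account for.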


How to set up Personalized Spatial Audio

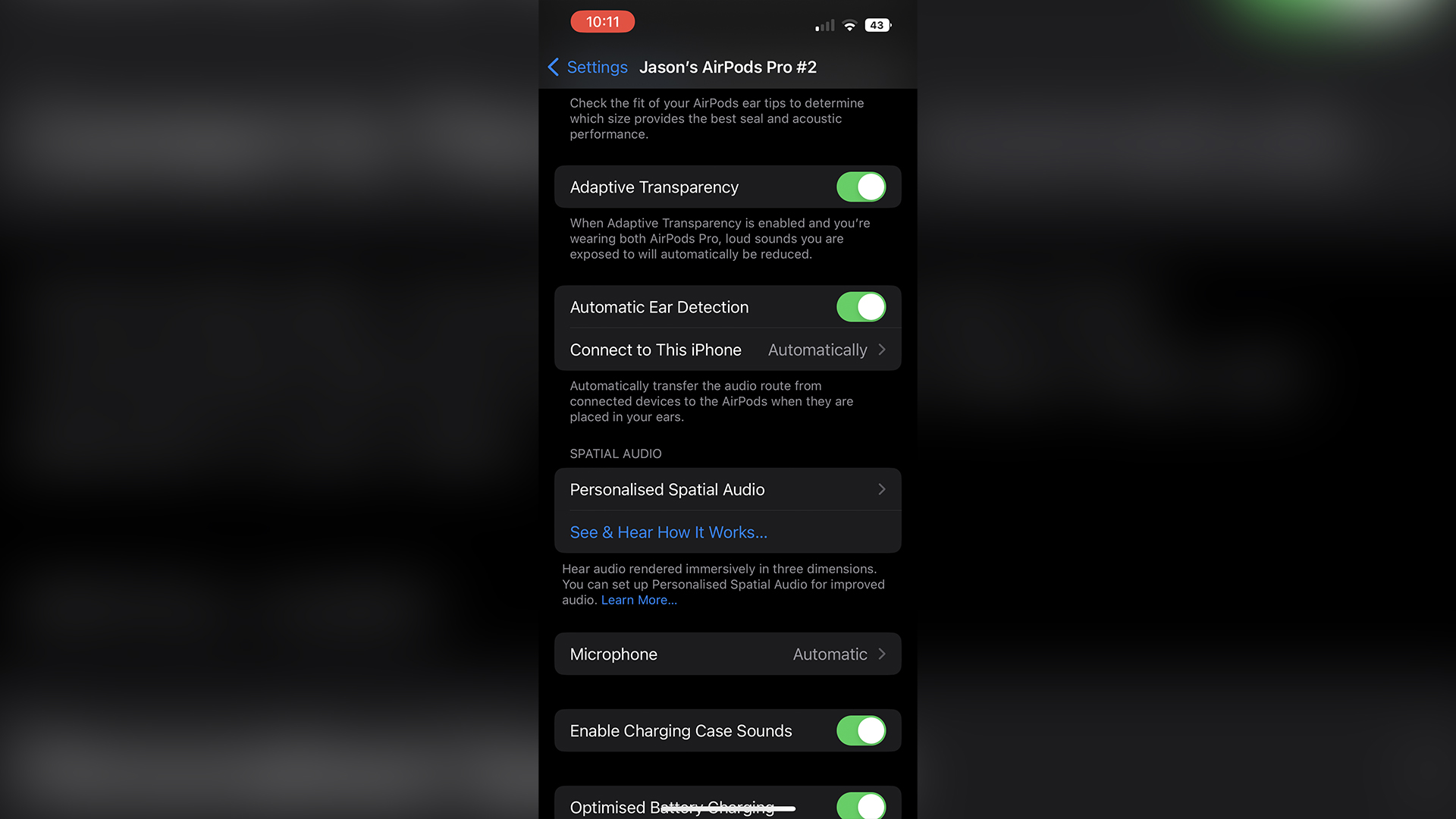

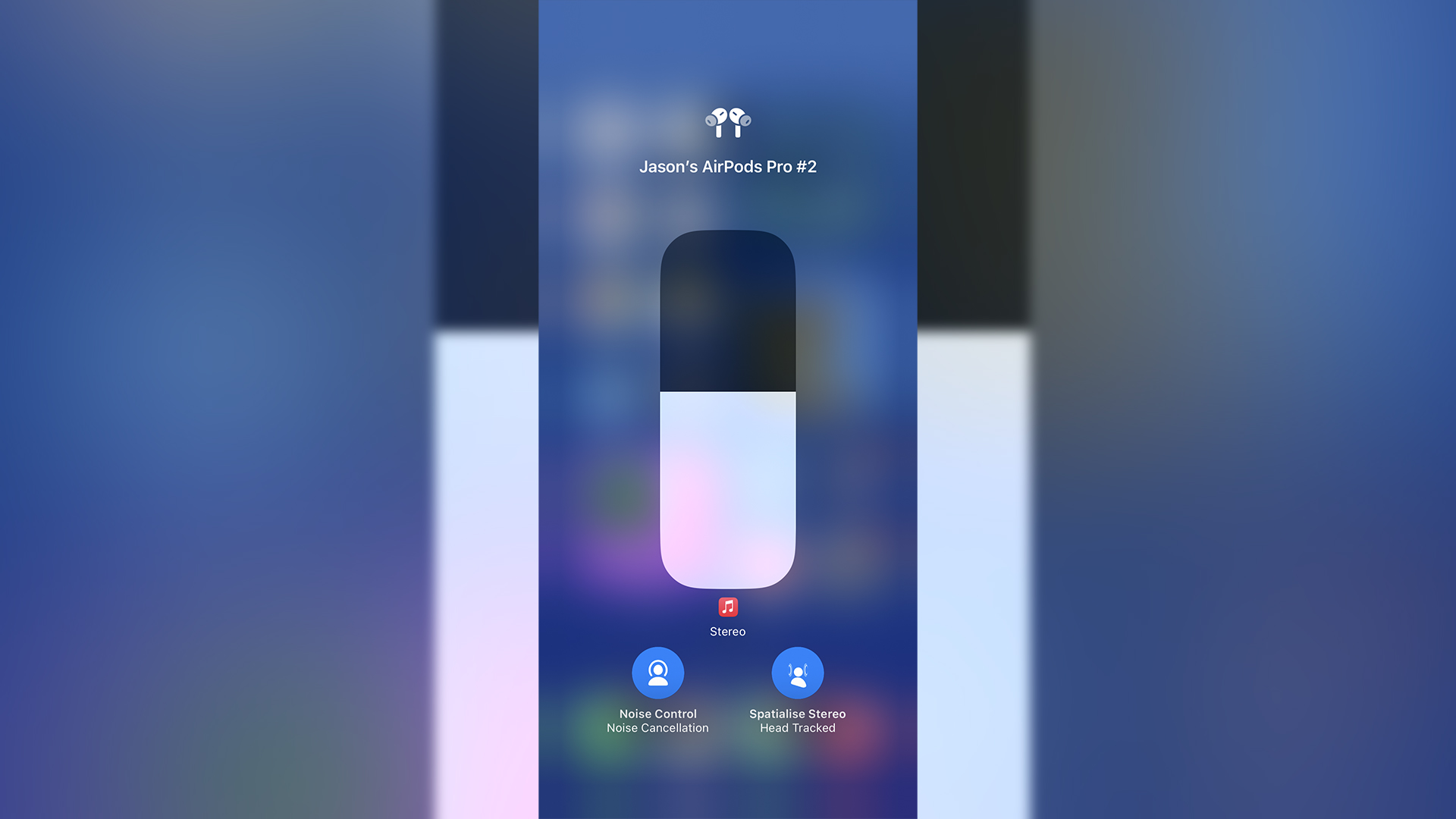

1. Head over to Settings and you’ll find your earbuds now have their own dedicated tab at the top of the list, so you no longer have to dive into the Bluetooth list. Tap on it and select Personalized Spatial Audio.

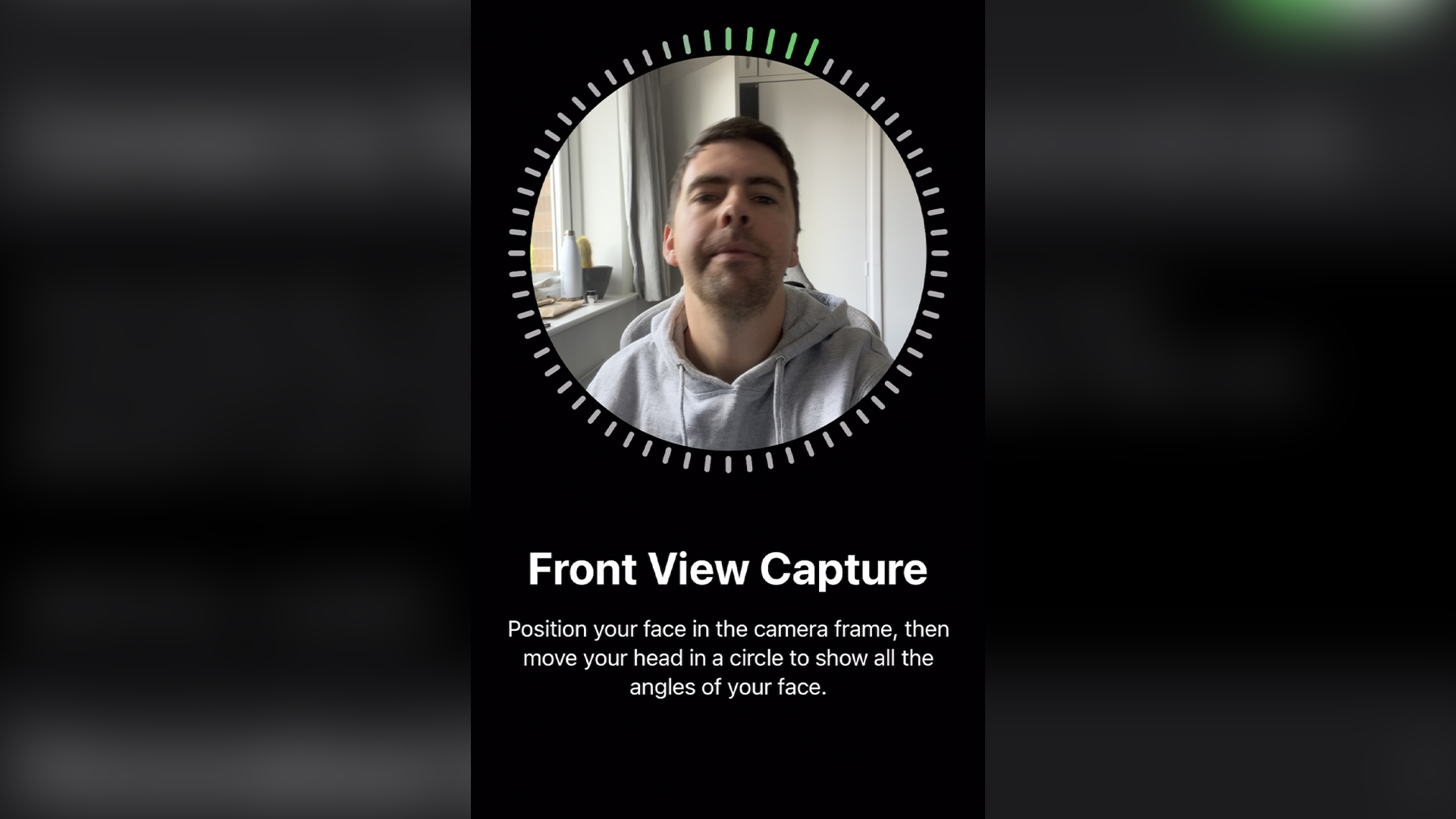

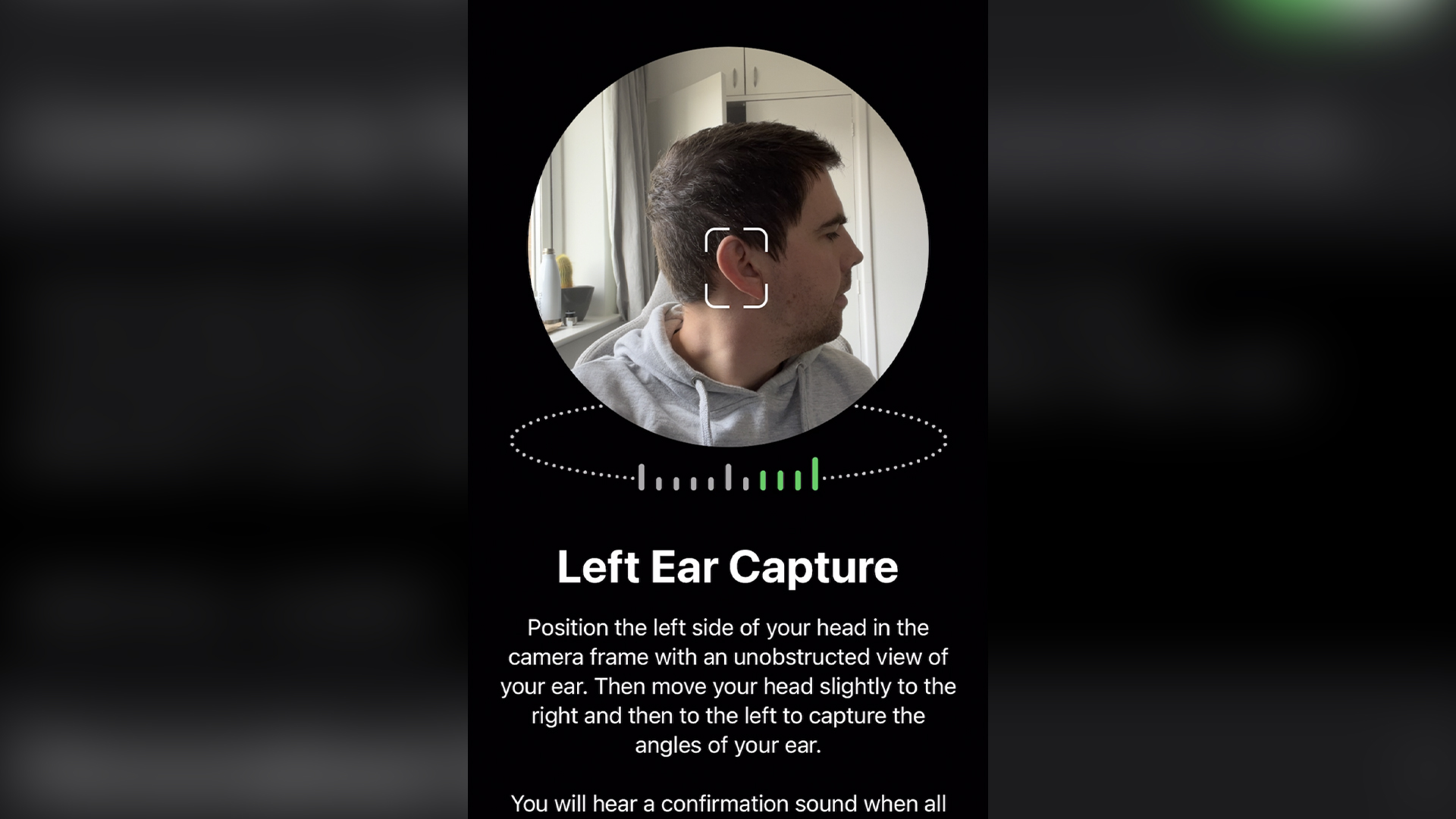

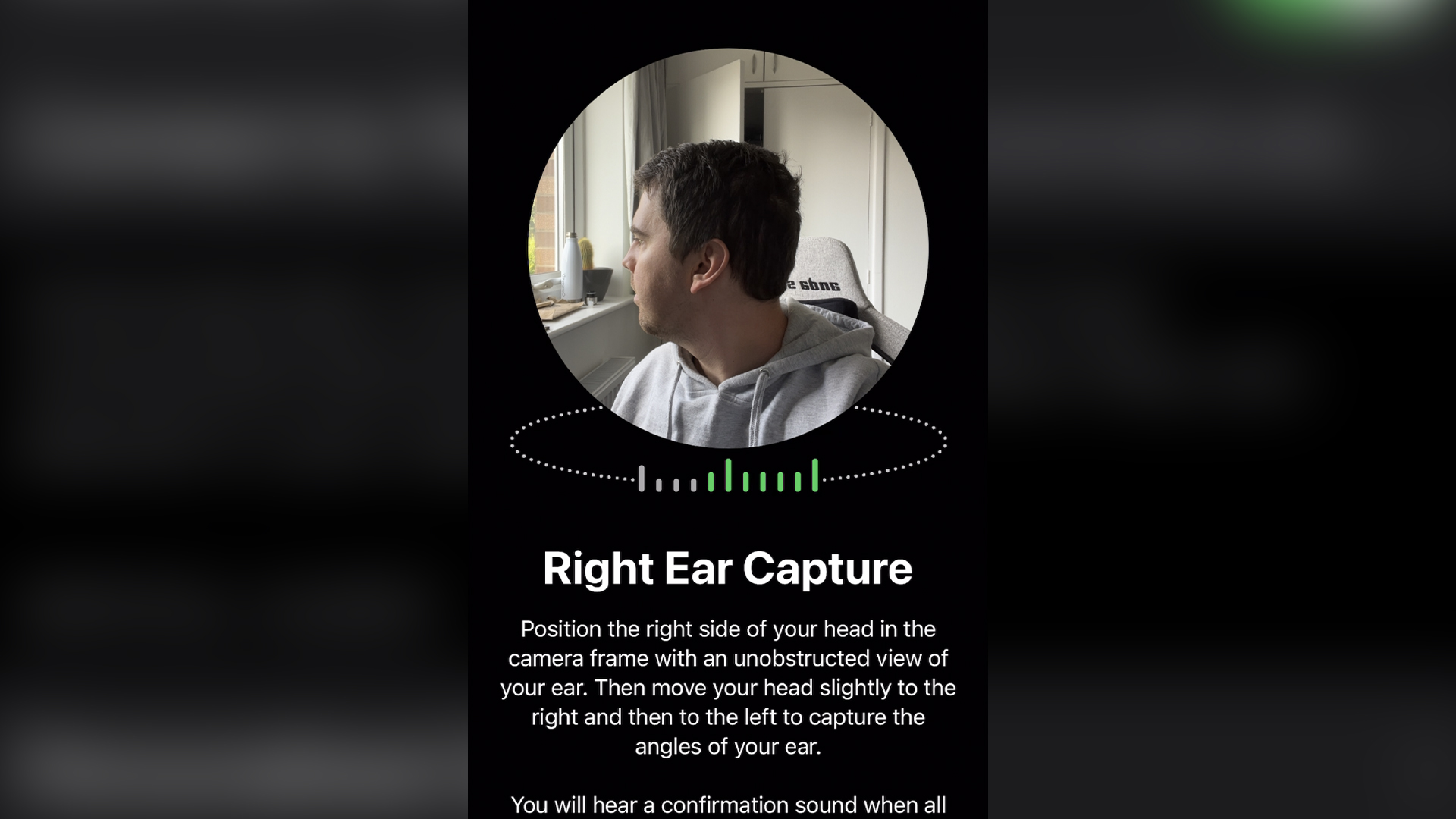

2. Your iPhone will then guide you through using the TrueDepth camera to scan three areas: the front of your head (your face), then your left ear and the left side of your head, followed by the right. My recommendation would be to turn your head rather than move the phone, as moving the phone makes it quite a pain to get the right angle.

3. Once done, that’s it! You have your own Personalized Spatial Audio profile. If you are concerned about privacy, Apple has confirmed to Laptop Mag that this data is anonymized and encrypted on your device.

It’s important to note that Personalized Spatial Audio only works on iPhones running iOS 16 or later with a TrueDepth camera (iPhone X and later). The feature is only available on the AirPods Pro 2, AirPods Pro, AirPods Max, AirPods 3, and Beats Fit Pro.

Does it make a difference?

Let’s be honest, for personal listening, spatial audio is a really cool party trick, but a party trick nonetheless. Head tracking is an especially cool feature that you’ll enjoy, even if it does make you look like a bit of a weirdo in public by turning your noggin around in circles while walking through the city.

Is there a difference between standard and Personalized Spatial Audio? Sort of, but it’s not a significant one. It took me a while to figure out what was different, but when activated, everything you listen to just feels a little bit wider. Each instrument has a stronger sense of placement within the space generated around you, which I think comes down to the more accurate head tracking that comes with tweaking the spatial audio to you.

While this is all really cool stuff, the question is obvious: will the honeymoon period end? Is this something that you’ll find genuine use and enjoyment in for years to come, or will there come a time when people view it as pointless? Time will tell on that one.

Jason brought a decade of tech and gaming journalism experience to his role as a writer at Laptop Mag, and he is now the Managing Editor of Computing at Tom's Guide. He takes a particular interest in writing articles and creating videos about laptops, headphones and games. He has previously written for Kotaku, Stuff and BBC Science Focus. In his spare time, you'll find Jason looking for good dogs to pet or thinking about eating pizza if he isn't already.