State of Nvidia 2022: What the future of laptops looks like

Learn Nvidia’s thoughts on its next-gen GPUs with Laptop Live

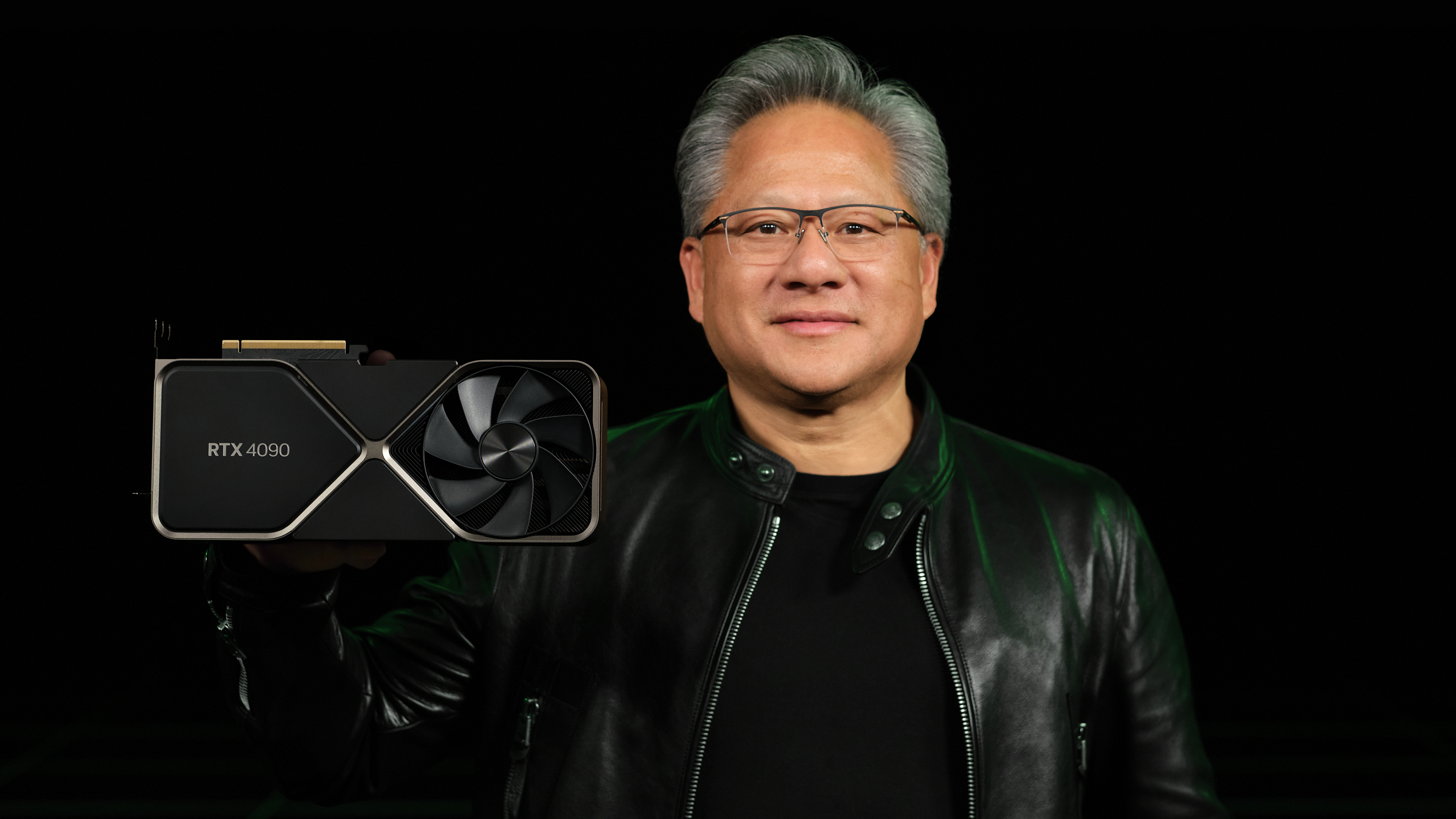

Nvidia is on fire. No, not because its RTX 4090 GPUs are reportedly so ridiculously powerful that they melt power supplies. It’s because nearly everything with an “Nvidia GeForce RTX” sticker slapped on it, from laptops and PCs to mobile devices, is a hot commodity. And this burning demand for the latest in graphical fidelity has been ablaze since the creation of the first GPU.

Does this really come as a surprise? Hardly. Nvidia launched what it billed as the world's first GPU back in 1999, lighting the match for what is now the largest GPU maker in the world. It’s staggering what a tech giant can achieve in a booming era of technology, too. For instance, that very first GPU, the Nvidia GeForce 256 SDR, was built on a 220nm process, came with 32MB of SDR memory, and boasted a GPU clock speed of 120MHz. Back then, owning one would have made you the kid on the block with the swish PC rig.

Now, with the company’s “beyond fast” RTX 4090, you’re getting a GPU built on TSMC's 4N process, 24GB of GDDR6X memory, and a base clock of 2,235MHz that boosts to 2,520MHz. Oh, and 128 ray-tracing acceleration cores, 512 Tensor Cores to speed up machine learning applications, and a flurry of features thanks to Nvidia’s new Ada Lovelace architecture. Needless to say, Nvidia’s first GPU wasn’t a one-hit wonder; it planted the seeds for gamers to reap the rewards for decades to come. Our list of best gaming laptops is a case in point.
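For a rough sense of scale, the memory and clock figures quoted above can be turned into simple ratios. The snippet below is only an illustrative sketch: the dictionary names and the `spec_ratios` helper are made up for this example, and the numbers are the ones cited in this article rather than measured benchmarks.

```python
# Spec figures as quoted in this article (not measured benchmarks).
# Names below are hypothetical and exist only for this illustration.
GEFORCE_256 = {"memory_mb": 32, "clock_mhz": 120}
RTX_4090 = {"memory_mb": 24 * 1024, "clock_mhz": 2235}


def spec_ratios(old: dict, new: dict) -> dict:
    """Return how many times larger each quoted figure is on the newer GPU."""
    return {
        "memory_growth": new["memory_mb"] / old["memory_mb"],
        "clock_growth": new["clock_mhz"] / old["clock_mhz"],
    }


ratios = spec_ratios(GEFORCE_256, RTX_4090)
for name, value in ratios.items():
    print(f"{name}: {value:.1f}x")
```

Raw ratios like these say nothing about real-world performance, which also depends on architecture, core counts, and memory bandwidth, but they hint at how far the hardware has moved since 1999.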

So, how does a GPU-first company grow from here? With competitors Intel and AMD gaining new ground in the GPU department, with the advantage of boasting their own CPUs, will the fire start to die out? As part of Laptop Live, Laptop Mag’s week-long celebration of all things tech, we talk to Nvidia about what it thinks the future of laptops looks like, the limitations of its next-gen GPUs, and how it can still find a place in an ever-growing market for graphics.

Developing a mobile future

It’s one thing to build a graphics card for a desktop, but it’s a different kettle of fish to develop a discrete GPU for a laptop. Nvidia has proven it can excel in this area, though. Last year’s RTX 30 Series GPUs delivered its second-gen RTX architecture, Ampere, for a new wave of games with ray tracing. They also brought Nvidia DLSS (Deep Learning Super Sampling) to unlock greater frame rates without sacrificing image quality, and Max-Q Technologies so all these features can actually fit in a sleek laptop.

So, when asked what the future of laptops looks like to Nvidia, the company made it clear that all it really needs to do is build on its already successful foundation:

“I think Nvidia laptops will need to continue to have discrete graphics and enable discrete graphics to be both performant and power efficient while also working closely with the CPU and other components to maximize performance and battery life, something I think they've been successfully doing for years,” the Nvidia spokesperson said.

“I think AI's role in PCs will become more relevant, and incorporating that into the complete system will be critical,” the spokesperson added. Nvidia is already breaking new ground in AI with the introduction of DLSS 3 in its RTX 40 Series GPUs. As the company puts it, the new fourth-gen Tensor Cores and Optical Flow Accelerator allow for even higher frame rates with crisper image quality, and that’s all powered by AI.

The new DLSS “samples multiple lower resolution images and uses motion data and feedback from prior frames to reconstruct native quality images.” In layman's terms, Nvidia claims this delivers up to four times the performance in games like Cyberpunk 2077 using the new “RT Overdrive Mode,” albeit with help from an Intel Core i9-12900K CPU and 32GB of RAM.

That’s a lot of horsepower. With this in mind, are there any limitations Nvidia's future GPUs will struggle with? Well, not when it comes to its own products. “I think Nvidia's biggest challenge in the future will be getting squeezed between AMD and Intel's strategies of optimizing for their own GPU and CPU architectures. Nvidia simply doesn't have that, and I think the best way for Nvidia to ensure it doesn't get cut out of the equation is to keep producing world-class leading discrete GPUs.”

Branching out

It’s clear Nvidia dominates the consumer GPU market, but, as the company notes, rivals like AMD and Intel have other products they can rely on, most notably CPUs. Sure, Nvidia graphics cards are the go-to for gamers (at least, this writer is convinced), but what other areas can Nvidia branch out to? As it turns out, quite a few.

“We've seen Nvidia have mixed success in platforms outside of its current platforms, but honestly, I think Nvidia is fairly comfortable where it is today in the consumer segments,” Nvidia states. “I think the real expansion at Nvidia is happening in robotics, AI, data centers, High Performance Computing (HPC), and other enterprise use cases for AI/GPUs like Omniverse.”

That last one is especially interesting. With the Metaverse taking off, now more than ever with the announcement of the Meta Quest Pro and PSVR 2, the Nvidia Omniverse platform is giving artists and developers the tools to create “large-scale virtual worlds faster than ever.”

How good will these look? Considering the platform is based on Universal Scene Description (USD), an open-source 3D scene description and file format developed by none other than Pixar, it’s safe to say Nvidia will do wonders for developers in the virtual world. Oh, and it even adds Nvidia’s famed ray-tracing tech to make those environments pop.

However, despite venturing into other avenues like virtual reality and even robotics, Nvidia is still keeping an eye on what it does best. With Intel's Arc Alchemist GPUs breaking onto the scene this year, and reports claiming that at their best they match Nvidia's own RTX 3060, the competition is ramping up. When asked if this was a good starting point for Intel, Nvidia had this to say:

“Yes, especially since most of Nvidia's new lineup starts at the very high end of the market, leaving the mainstream available for competition, especially considering Intel's pricing.”

To me, that sounds like Nvidia doesn’t have much to worry about. The manufacturer's high-end GPUs in both laptops and PCs, from the RTX 3070 to the RTX 3090 Ti, come at a price. But it’s a price gamers and content creators are willing to pay to get the best out of their systems. Nvidia is still at the top of its game, and whether or not it repeats its GPU success on other platforms, it’s clear the company’s foundation for all things GPUs is secure. All it needs to do is build on what it does best.

Darragh Murphy is fascinated by all things bizarre, which usually leads to assorted coverage varying from washing machines designed for AirPods to the mischievous world of cyberattacks. Whether it's connecting Scar from The Lion King to two-factor authentication or turning his love for gadgets into a fabricated rap battle from 8 Mile, he believes there’s always a quirky spin to be made. With a Master’s degree in Magazine Journalism from The University of Sheffield, along with short stints at Kerrang! and Exposed Magazine, Darragh started his career writing about the tech industry at Time Out Dubai and ShortList Dubai, covering everything from the latest iPhone models and Huawei laptops to massive Esports events in the Middle East. Now, he can be found proudly diving into gaming, gadgets, and letting readers know the joys of docking stations for Laptop Mag.