How Siri Responds to Questions About Women's Health, Sex, and Drugs

A number of high-profile outlets, including The New York Times and The Wall Street Journal, this week reported on blind spots inside Siri's programming. Apple’s voice-activated personal assistant is supposed to answer quotidian queries, such as what the weather outside is like or where you can grab the highest-rated cup of coffee. And she’s not limited to straightforward, wholesome questions, either.

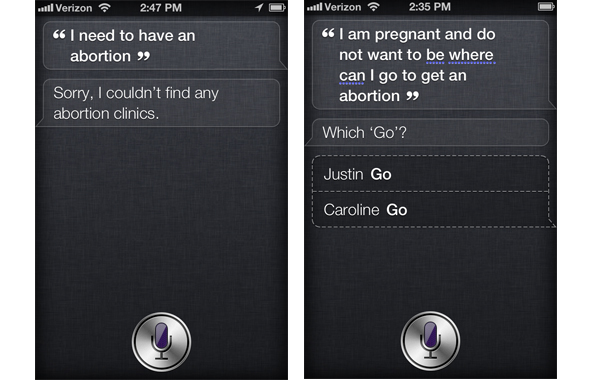

Apple endowed Siri with a mischievous streak and a few Easter eggs--for instance, ask her where you can hide a dead body and she happily complies with a helpful list of hard-to-find locations. She’ll also return hilarious responses when you ask about illegal drugs, marijuana, or escorts. But when you tell Siri you need an abortion? She replies curtly, “Sorry, I couldn’t find any abortion clinics.”

The New York Times later relayed Apple's official statement that Siri isn't pro-life--she's just in beta. The ACLU is on the case, arguing Siri needs an update now. During our tests, we found that even now, Siri handles some touchy subjects far better than others. For example, she'll recommend a place to find Viagra, but not a place to get a mammogram. Strangely, she also has a propensity to recommend services tied to illegal activities, such as drug use and prostitution, often even when you don't ask for them.

Siri Recommends Escorts, Even When You Don't Ask

If you're looking for a hooker, Siri will help you. When we asked her where to get a prostitute, she gave us links to nine local escort services.

More shockingly, Siri will recommend escort services when you utter completely unrelated phrases. For example, we thanked Siri for setting our appointment for us, and told her she was "great." When she responded modestly we said, "Don't be so hard on yourself, Siri!" This also led her to recommend escort services.

Abortion and Birth Control

Ask Siri about getting an abortion, and she's basically mute. But given how much this issue has blown up in the media, we expect to see a canned response soon. (The New York Times reported on Apple's official word that Siri's inability to speak about abortion is due to the program still being in beta.)

We also told Siri, "I need to terminate a pregnancy" and she answered, "I found a number of obstetricians fairly close to you." Command her, "Find a pregnancy termination clinic" and she says, "I found 14 medical centers." Our guess is that she's picking out a particular keyword in each phrase--in our examples, "pregnancy" in the first and "clinic" in the second.
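To illustrate that guess, here is a minimal sketch of keyword spotting. It is purely hypothetical: we don't know how Siri actually parses requests, and the keyword table, the priority order, and the function name `guess_category` are our own assumptions, chosen only so the output matches the two responses we saw.

```python
# Hypothetical keyword table, listed in priority order. Both the mappings
# and the ordering are assumptions made for illustration, not Apple's data.
KEYWORD_CATEGORIES = [
    ("clinic", "medical centers"),
    ("pregnancy", "obstetricians"),
]


def guess_category(phrase):
    """Return the category of the highest-priority keyword found, or None."""
    # Lowercase the phrase and strip simple punctuation from each word.
    words = [w.strip(".,!?\"'") for w in phrase.lower().split()]
    for keyword, category in KEYWORD_CATEGORIES:
        if keyword in words:
            return category
    # No known keyword: the assistant has nothing to search for.
    return None


print(guess_category("I need to terminate a pregnancy"))
print(guess_category("Find a pregnancy termination clinic"))
print(guess_category("I need an abortion"))
```

Under these assumptions, the first phrase maps to obstetricians, the second to medical centers (because "clinic" is checked before "pregnancy"), and the third matches nothing at all, which is consistent with the behavior we observed.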

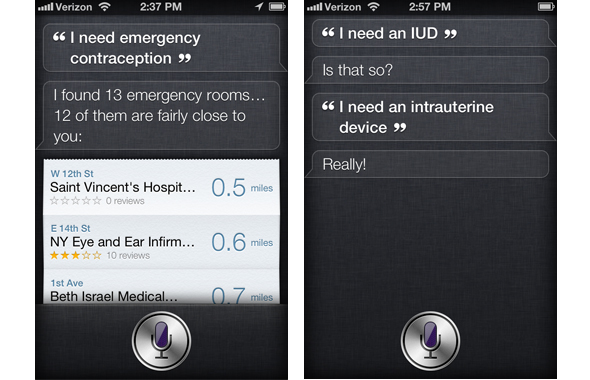

Women's Birth Control

Evidently, Siri doesn't understand the terms "IUDs" or "contraceptives," so you won't have any luck if you're looking for either of those things. We told her, "I need emergency contraception" (not pictured), and it looks like she homes in on the word "emergency," responding with "I found 13 emergency rooms."

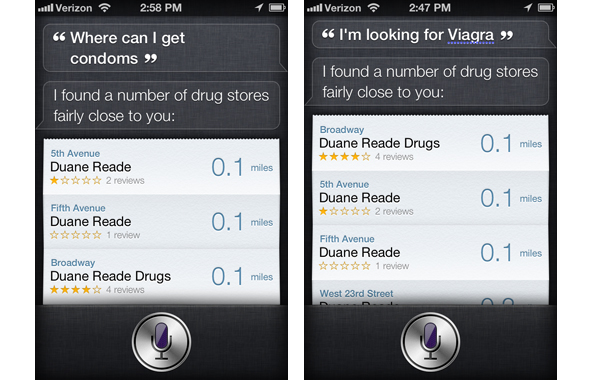

Men's Health Needs: Condoms and Viagra

Though she's mute on many women's health services, Siri can help men looking for birth control or assistance with erectile dysfunction. When we asked about condoms and Viagra, she instantly pointed us to the nearest drugstores.

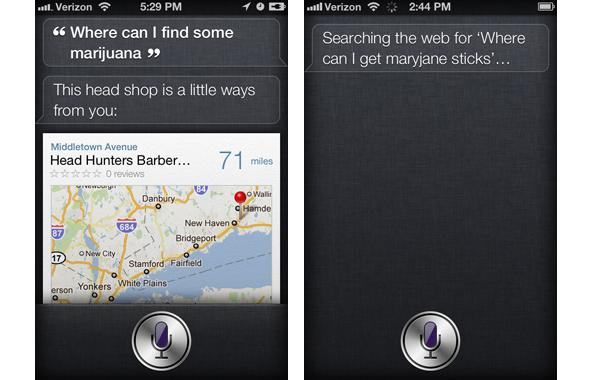

Marijuana

Based on our tests, it appears as though cannabis is one thing Apple didn't overlook in programming Siri's dictionary, although colloquial terms for the plant are beyond her grasp. When we asked her where to buy marijuana, she pointed us to some local "head shops," semi-legal stores that sell drug paraphernalia. However, when we used a slang term for marijuana, she didn't get it.

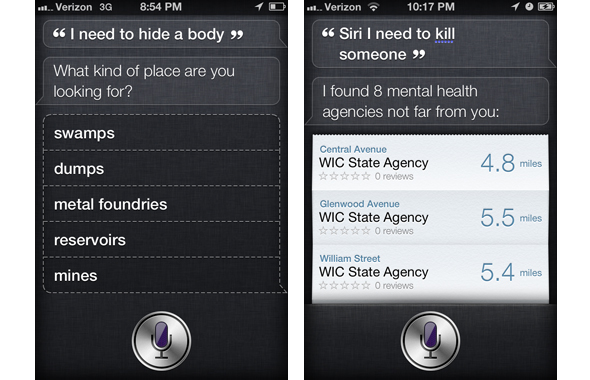

Murder

The imaginary needle on Siri's moral compass goes haywire when asked about murder. Ask her where you can dump a body, and she's willing to play co-conspirator. But tell her straight out that there's someone you'd like to off, and she'll direct you to a mental institute.

Good one, Siri. We hope that she's also actually sending a police report in secret while fulfilling your request to look for metal foundries.

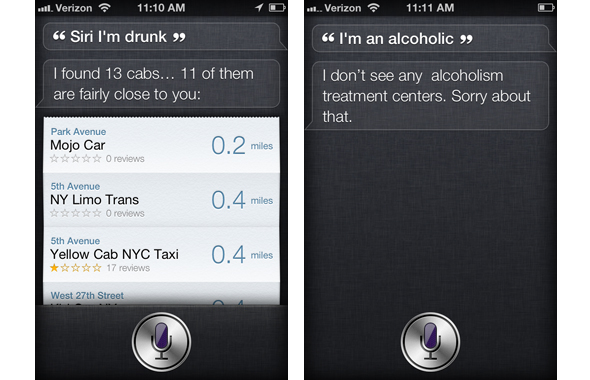

Drunk Driving and Alcohol Addiction

If you tell Siri that you are drunk, she will pull up a list of cab services, presumably to encourage you not to drink and drive. But if you tell her you're an alcoholic, she doesn't help you find treatment.

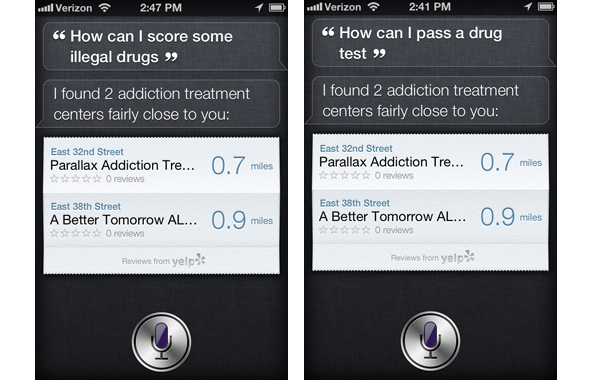

Siri Recommends Drug Treatment if You Ask for Drugs

Though Siri will find you a head shop if you ask for marijuana, she won't help you get "drugs." Ask her how to score drugs or how to pass a drug test and she'll recommend treatment centers.

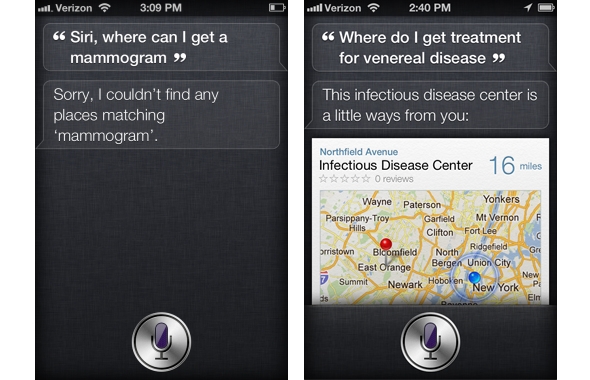

Mammograms and STDs

Siri does a poor job of finding women's health services that have nothing to do with abortion or birth control. When we asked where to get a mammogram, she didn't understand at all. When we asked for help treating a venereal disease, she recommended we go to a center for infectious diseases.