New Software Replaces Your Mouse With Your Smile

LAS VEGAS -- Over the past several years, we've seen all kinds of gesture-control software that lets you navigate around a computer desktop by waving your hands in front of a webcam. But what if you don't have use of your hands? Smyle, an upcoming Windows application from startup Perceptive Devices, lets users control the mouse pointer and click, drag or even right-click items with simple facial movements.

Due out later in Q1 for an undisclosed price, Smyle is designed not only for disabled users, but for professional users such as surgeons or food preparers who might need to operate a computer while using their hands for another task.

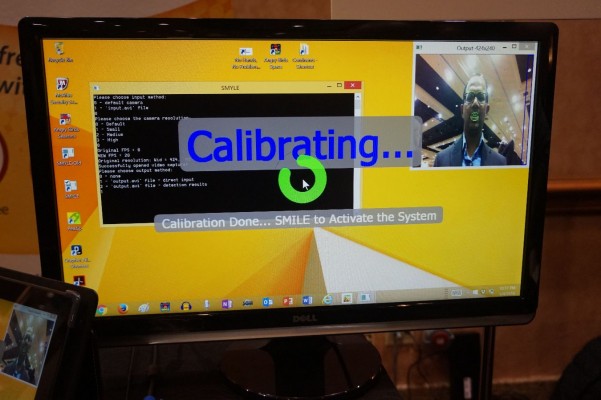

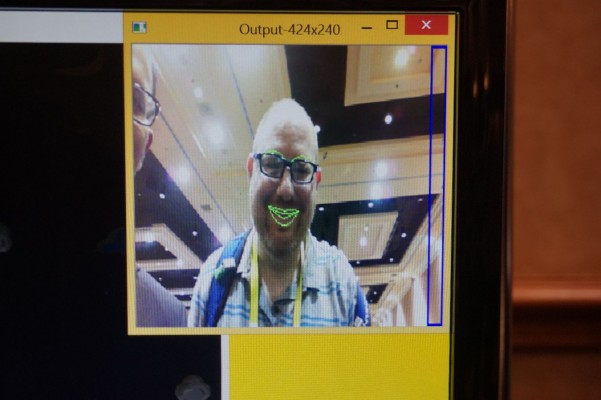

Here at CES 2016, Perceptive Devices' Uday Parshionikar demonstrated how Smyle can take simple facial movements, like a quick smile, and turn them into mouse clicks. As we watched, Parshionikar calibrated the software to his face by grinning. After a few seconds of setup, he smiled briefly to activate navigation mode, then rotated his head to move the mouse pointer around the screen. He was able to click on objects such as icons with a very quick smile.

We were particularly impressed by his ability to play Angry Birds using Smyle's facial controls. With head and face movements alone, he was able to not only launch the game and navigate through its menus, but accurately pull back on a slingshot and hit some pigs.

The software we saw was very much still in beta. When Parshionikar tried to calibrate it to my face, it detected my mouth, but failed when I tried to navigate around the screen.

Parshionikar performed the entire demo on a 2nd-generation Microsoft Surface Pro, which has an older Core i5 processor and a standard 720p webcam. He told us that when Smyle ships, it will run on any reasonably powered modern Windows 7, 8 or 10 PC, and that it doesn't even require an HD webcam.

Though the Smyle application is designed for Windows PCs, Parshionikar has much larger ambitions for his technology. He said the company also wants to license its technology to smart-glasses vendors who can use sensors, rather than a camera, to detect users' facial movements and help them navigate around wearable UIs. Imagine using Google Glass or Microsoft HoloLens without having to lift a finger or say a word.

We look forward to getting a closer look at the final build of the software when it ships by the end of March.